I’ve been in the trenches with AI implementations for three years now, and I’m about to share something that will shock you.

92% of companies are planning to increase their AI investments over the next three years, but here’s the brutal reality: only 1% call themselves “mature” on AI deployment.

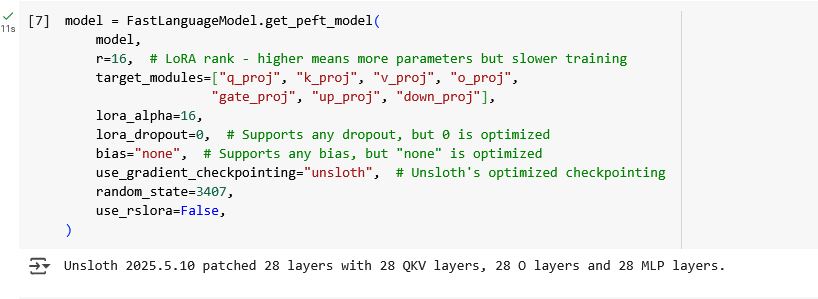

Why? Because they’re confusing three completely different technologies: generative AI, AI agents, and agentic AI.

After analyzing thousands of AI implementations and consulting with Fortune 500 companies, I’ve discovered that most businesses are throwing money at the wrong solutions. They’re trying to use generative AI for tasks that need AI agents, or expecting AI agents to handle complex workflows that require agentic AI systems.

This confusion is costing companies millions in failed AI projects. But it doesn’t have to cost you.

1. What Is Generative AI (And Why Most Businesses Get It Completely Wrong)

Generative AI is the content creation powerhouse that everyone thinks they understand—but most people are using it for all the wrong things.

When you type a prompt into ChatGPT, Claude, or any large language model, you’re using generative AI. It’s a pattern-matching machine that creates new content based on statistical probabilities from its training data. Exploring different generative AI models can help businesses identify the right tool for each type of content or task.

But here’s where 90% of businesses mess up: they try to use generative AI for complex, multi-step workflows. It’s like trying to perform surgery with a hammer—sure, you might get some results, but there are much better tools for the job.

Key insight: Generative AI is reactive, not proactive. It sits there waiting for your command, then responds based on patterns it learned. On its own, it does not take initiative, and it will not carry a multi-step task through unless something prompts it at every step.
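To make "reactive" concrete, here is a toy sketch. It is not how ChatGPT or Claude work internally; it is a tiny word-pair model used as a stand-in, and the names `train_bigrams` and `generate` and the sample corpus are invented for illustration. The point is the shape of the interaction: nothing happens until you hand it a prompt, and the output is sampled from patterns seen in the training text.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for every word, the words that followed it in the training text."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, prompt_word, max_words=8, seed=0):
    """Continue from prompt_word by sampling a follower at each step."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same text
    output = [prompt_word]
    for _ in range(max_words - 1):
        options = followers.get(output[-1])
        if not options:  # no pattern learned for this word, so stop
            break
        output.append(rng.choice(options))
    return " ".join(output)


# Hypothetical training text, just enough to show the idea.
corpus = (
    "the agent checks inventory and the agent updates records "
    "and the model writes text"
)
model = train_bigrams(corpus)

# The model does nothing until it is called with a prompt.
print(generate(model, "the"))
```

Notice what is missing: no goal, no tools, no memory of what it did last time. That gap is exactly what the next two sections fill.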

Here’s what generative AI actually excels at:

- Content creation (blog posts, emails, product descriptions)

- Code generation and debugging assistance

- Language translation and localization

- Summarization of existing documents

- Creative brainstorming and ideation

The real game-changer? Generative AI usage jumped from 55% to 75% among business leaders in just the last year. But most of them are still treating it like a magic solution for everything.

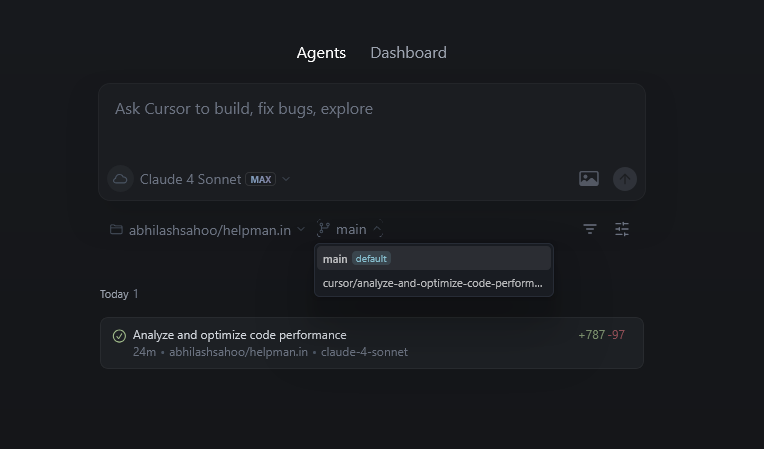

2. AI Agents: The Single-Task Automation Revolution Everyone’s Talking About

AI agents are where the real business transformation starts happening—but they’re not what most people think they are.

Unlike generative AI, which just creates content, an AI agent performs specific tasks using multiple tools and data sources. Think of it as a digital employee with a very specific job description.

Here’s the reality check: most of what the market calls “agents” today is a large language model with basic planning and tool-calling capabilities added. True AI agents are still in early development stages.

But when they work, they’re incredible. Let me share a real example that blew my mind:

I worked with an e-commerce company that built an AI agent for customer service. This single agent:

- Analyzed customer purchase history in real-time

- Checked current inventory levels across warehouses

- Processed returns and exchanges automatically

- Generated personalized product recommendations

- Updated customer records without human intervention

The result? They reduced customer service costs by 67% while improving response times from 24 hours to under 2 minutes.

The game-changer: AI agents can perform goal-oriented tasks autonomously. They don’t just respond to prompts—they execute complete workflows end-to-end.
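Here is a minimal sketch of what "goal-oriented with tools" can look like in code. It is an illustration under assumptions, not the e-commerce company's actual system: the `Agent` class, the two tools, and the in-memory `ORDERS` and `INVENTORY` data are all hypothetical. In a production agent a language model would usually decide which tool to call next; here that plan is hard-coded so the example runs without any external service.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory data standing in for real order and warehouse systems.
ORDERS = {"cust-1": ["running shoes", "socks"]}
INVENTORY = {"running shoes": 12, "socks": 0}


def purchase_history(customer_id):
    """Tool: return the items this customer has bought."""
    return ORDERS.get(customer_id, [])


def stock_level(item):
    """Tool: return how many units of the item are in stock."""
    return INVENTORY.get(item, 0)


TOOLS = {"purchase_history": purchase_history, "stock_level": stock_level}


@dataclass
class Agent:
    tools: dict
    log: list = field(default_factory=list)  # audit trail of every tool call

    def call(self, name, arg):
        """Invoke a tool by name and record the call."""
        result = self.tools[name](arg)
        self.log.append((name, arg, result))
        return result

    def handle_exchange(self, customer_id, item):
        """Goal: decide whether an exchange request can be fulfilled."""
        if item not in self.call("purchase_history", customer_id):
            return "escalate: item not in purchase history"
        if self.call("stock_level", item) > 0:
            return f"exchange approved for {item}"
        return f"{item} out of stock, offer refund"


agent = Agent(TOOLS)
print(agent.handle_exchange("cust-1", "running shoes"))
print(agent.handle_exchange("cust-1", "socks"))
```

The design choice worth copying is the `log`: every tool call is recorded, so a human can review what the agent did and why it escalated.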

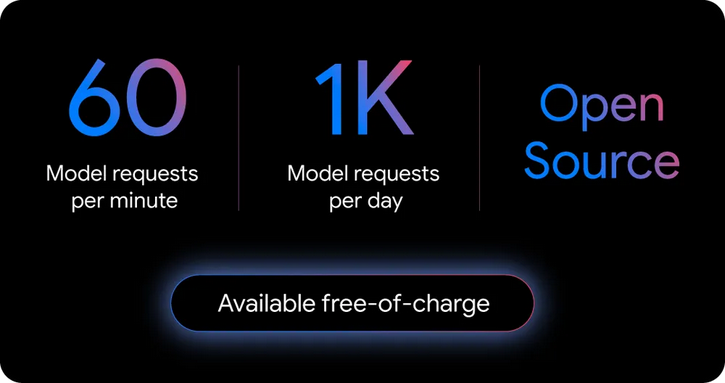

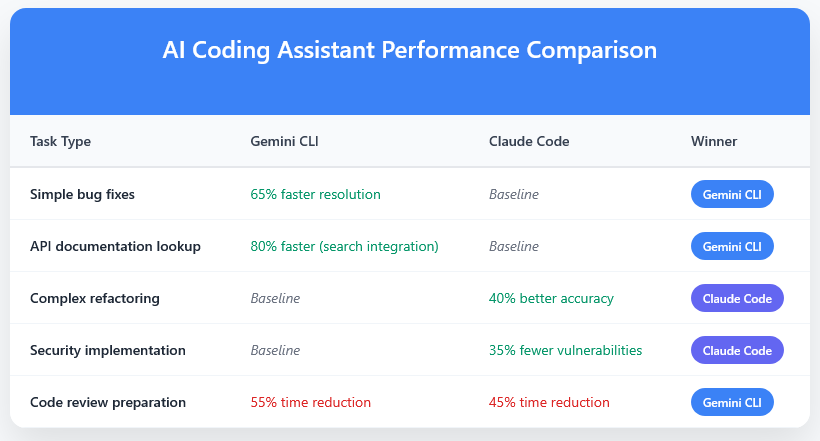

Popular frameworks for building AI agents in 2025:

- LangChain: Perfect for beginners, extensive documentation

- AutoGen: Microsoft’s multi-agent framework with enterprise focus

- CrewAI: Role-based AI agent orchestration

- LangGraph: For complex, graph-based agent workflows

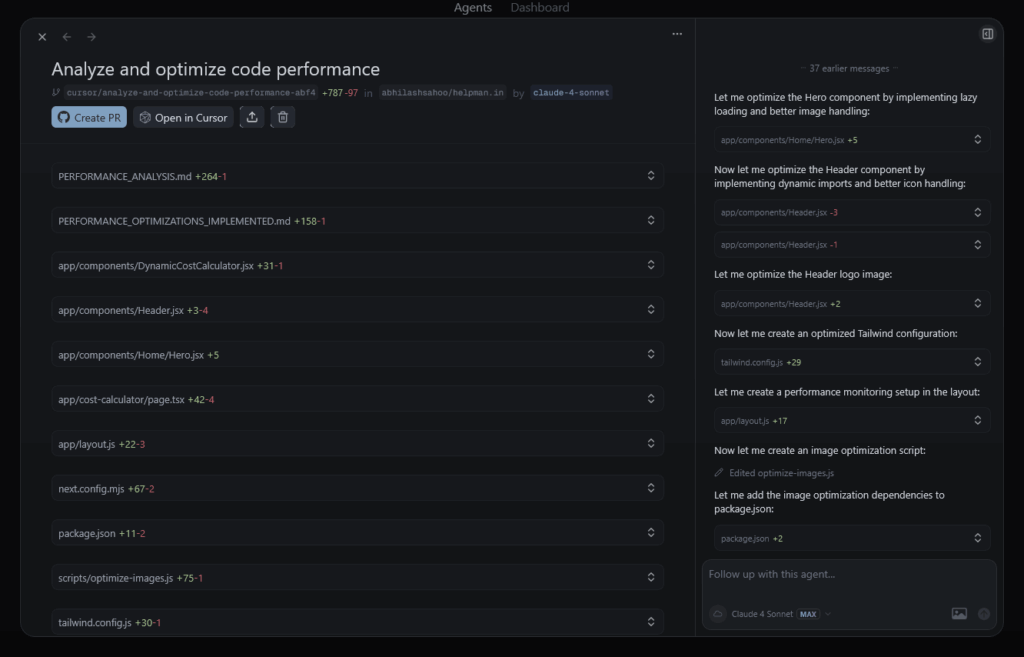

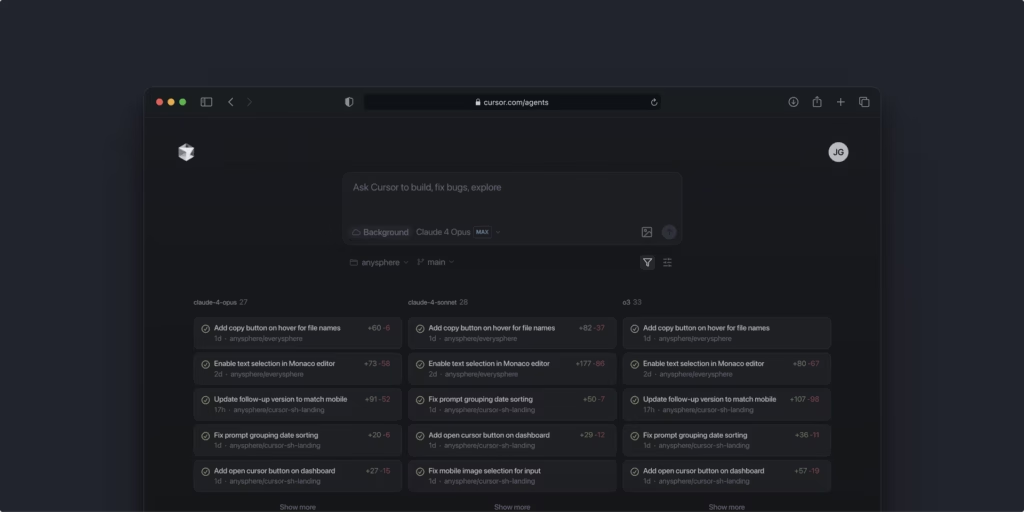

3. Agentic AI: When Multiple AI Systems Orchestrate Like a Symphony

If AI agents are digital employees, agentic AI is the entire management team working together to solve problems no single agent could handle.

This is where things get really exciting. Gartner predicts that by 2028, 33% of enterprise software applications will include agentic AI, up from less than 1% in 2024.

Agentic AI represents the next evolution in artificial intelligence. Instead of one AI doing one job, you have multiple specialized AI agents collaborating, delegating tasks, and even supervising each other’s work.

Here’s a mind-blowing example from a content marketing agency I recently consulted for:

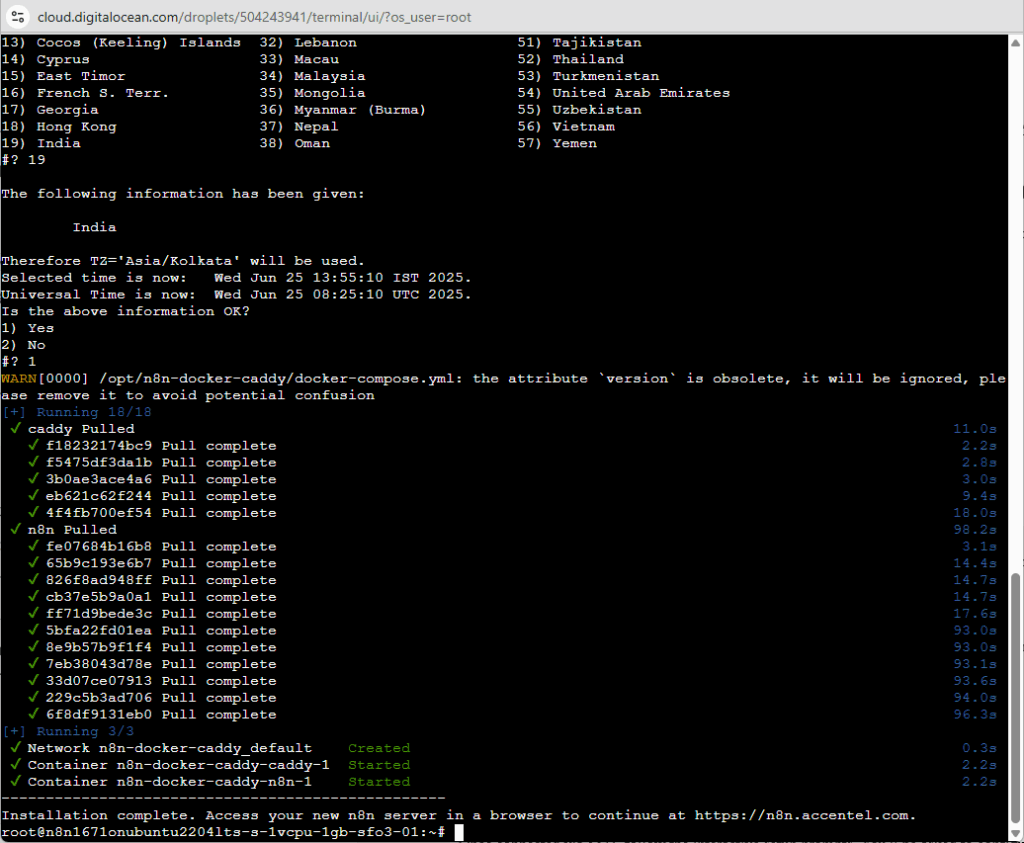

They built an agentic AI system that converts YouTube videos into complete blog posts. Here’s how the agent orchestra performs:

- Transcription Agent: Downloads and transcribes video content

- Analysis Agent: Identifies key topics, themes, and audience insights

- Research Agent: Gathers additional data, statistics, and supporting evidence

- Writing Agent: Creates structured, SEO-optimized blog content

- SEO Agent: Optimizes for search engines and adds metadata

- Quality Agent: Reviews, fact-checks, and refines final output

The breakthrough result? They increased content production by 340% while maintaining higher quality than their previous human-only processes.

The revolutionary insight: Agentic AI systems handle complex, multi-step workflows requiring different types of expertise—just like high-performing human teams.
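The hand-offs between those six agents can be sketched as a simple pipeline. Treat this as a rough outline under assumptions: every agent below is a stub function invented for illustration, the URL is a placeholder, and a real system would put a model or service behind each step and likely add retries and branching. What it does show is the core pattern: specialized steps sharing one state object, with a final agent checking the others' work.

```python
def transcription_agent(state):
    # Stand-in for downloading and transcribing the video.
    state["transcript"] = f"transcript of {state['video_url']}"
    return state


def analysis_agent(state):
    # Stand-in for topic extraction from the transcript.
    state["topics"] = ["topic A", "topic B"]
    return state


def research_agent(state):
    # Stand-in for gathering supporting evidence per topic.
    state["evidence"] = {t: f"supporting note for {t}" for t in state["topics"]}
    return state


def writing_agent(state):
    # Build one draft line per topic from the research notes.
    lines = [f"{t}: {state['evidence'][t]}" for t in state["topics"]]
    state["draft"] = "\n".join(lines)
    return state


def seo_agent(state):
    # Attach metadata derived from the topics.
    state["metadata"] = {"keywords": list(state["topics"])}
    return state


def quality_agent(state):
    # Supervises the others: approve only if every topic made it into the draft.
    state["approved"] = all(t in state["draft"] for t in state["topics"])
    return state


PIPELINE = [
    transcription_agent,
    analysis_agent,
    research_agent,
    writing_agent,
    seo_agent,
    quality_agent,
]


def run_pipeline(video_url):
    """Orchestrator: pass shared state through each agent in order."""
    state = {"video_url": video_url, "trace": []}
    for agent in PIPELINE:
        state = agent(state)
        state["trace"].append(agent.__name__)
    return state


result = run_pipeline("https://example.com/video")  # placeholder URL
print(result["trace"])
print("approved:", result["approved"])
```

Keeping the orchestrator separate from the agents means you can swap one specialist out, or insert a human review step, without touching the rest.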

4. The Critical Differences That Determine Your AI ROI in 2025

Understanding these differences isn’t academic—it’s the difference between AI success and expensive failure.

Here’s what I’ve learned from working with hundreds of AI implementations: the companies that get these distinctions right are pulling ahead fast. The ones that don’t are wasting massive budgets on the wrong solutions.

When to Use Generative AI

- Content creation at scale for marketing teams

- Quick brainstorming and ideation sessions

- First drafts of marketing materials and communications

- Code snippets and technical documentation

- Simple question-answering scenarios

When to Deploy AI Agents

- Customer service automation and support

- Data analysis and automated reporting

- Lead qualification and nurturing workflows

- Inventory management and supply chain optimization

- Executive assistant tasks and scheduling

When to Implement Agentic AI Systems

- Complex workflow automation across departments

- Multi-step process optimization

- Strategic planning and execution coordination

- Large-scale content operations

- Enterprise-wide decision support systems

The hidden cost of confusion? I’ve seen companies spend $500,000 building agentic AI systems for tasks that generative AI could handle for $50 per month. On the flip side, I’ve watched businesses struggle with manual processes that a simple AI agent could automate completely.
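To put that gap in perspective, here is the back-of-the-envelope arithmetic using only the two figures above. The function name is made up, and the calculation deliberately ignores maintenance, usage-based pricing, and staff time, so read it as a rough comparison rather than a cost model.

```python
def months_covered(build_cost, monthly_fee):
    """How many months of the subscription the build budget would pay for."""
    return build_cost / monthly_fee


months = months_covered(500_000, 50)
years = months / 12

print(f"{months:,.0f} months, or roughly {years:.0f} years of subscription")
# prints "10,000 months, or roughly 833 years of subscription"
```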

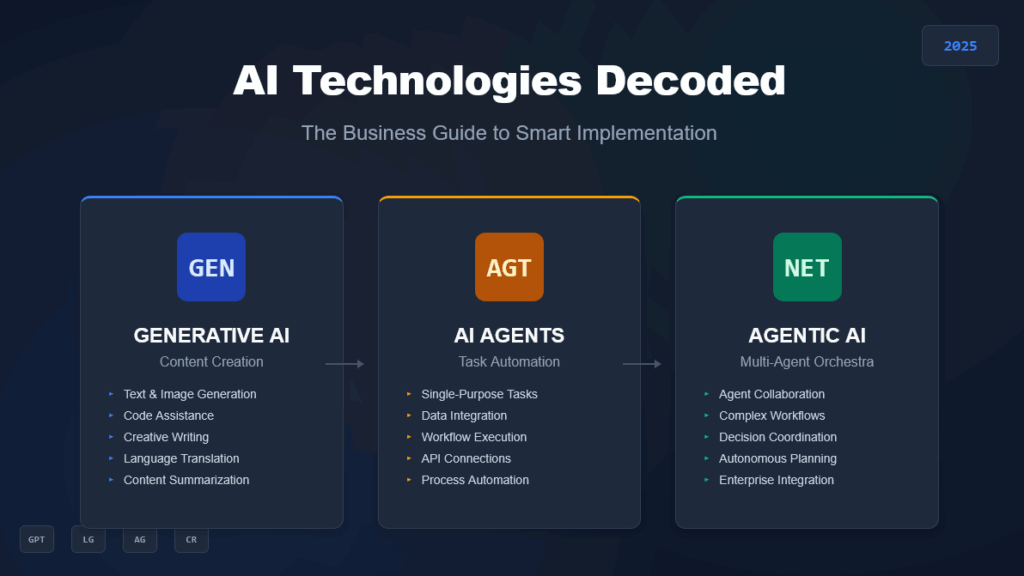

5. The 2025 Implementation Framework That Actually Works

Start with assessment, not technology—this single shift will save you more money than any other decision you make this year.

Before you invest in any AI solution, map out your specific use cases with surgical precision:

- Problem Definition: What exactly are you trying to solve?

- Success Metrics: How will you measure ROI and business impact?

- Complexity Evaluation: Is this a single-step or multi-step process?

- Integration Requirements: What systems need to work together?

Then choose your technology stack:

- Simple content generation = Generative AI

- Task automation with external data = AI Agent

- Complex workflow coordination = Agentic AI
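That three-way rule is simple enough to write down as code. This is one possible encoding, not a definitive test: the `UseCase` fields are my own shorthand for the complexity and integration questions above, and real projects will have gray areas that two booleans cannot capture.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    needs_external_data: bool  # must the task call tools or live systems?
    needs_coordination: bool   # must several specialized steps work together?


def choose_technology(use_case):
    """Map a use case to the simplest technology that covers it."""
    if use_case.needs_coordination:
        return "Agentic AI"
    if use_case.needs_external_data:
        return "AI Agent"
    return "Generative AI"


# Hypothetical examples drawn from the use-case lists earlier in the article.
examples = [
    UseCase("first drafts of marketing emails", False, False),
    UseCase("customer service automation", True, False),
    UseCase("cross-department workflow automation", True, True),
]

for case in examples:
    print(f"{case.name} -> {choose_technology(case)}")
```

The checks run from most to least complex, and the function falls through to generative AI by default, which matches the advice to start with the cheapest tool that solves the problem.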

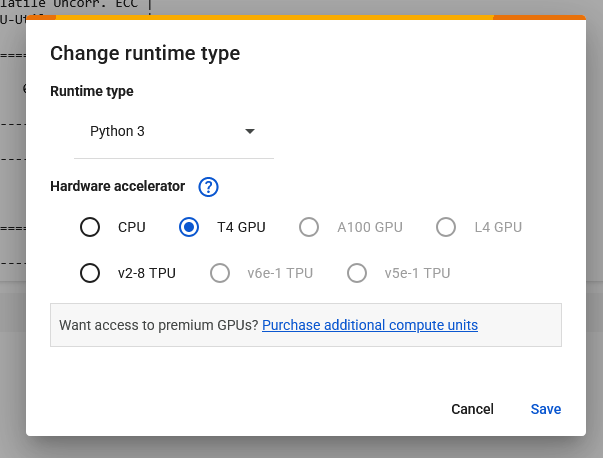

The Multimodal AI Revolution

2025 is seeing explosive growth in multimodal AI that processes text, images, audio, and video simultaneously. This isn’t just a nice-to-have—it’s becoming essential for competitive advantage.

Financial services companies are already using multimodal AI to analyze market commentary videos, considering non-verbal cues like tone and facial expressions alongside spoken words for nuanced market sentiment analysis.

The Responsible AI Imperative

Here’s something that will keep you up at night: 99% of companies in North America and Europe recognize the urgent need for ethical AI practices, but most haven’t implemented proper governance frameworks.

The companies that get responsible AI right aren’t just avoiding disasters—they’re gaining massive competitive advantages through improved operational utility and corporate culture.

Final Results: The Real-World Impact You Can Expect

Companies that understand these distinctions are already seeing transformational results:

- Cost Reduction: Companies implementing scalable AI are cutting operational costs by up to 30%

- Productivity Gains: Proper AI implementation is boosting productivity across entire organizations

- Process Automation: Complex workflows that took days now complete in hours

- Customer Experience: Response times improving from hours to minutes

- Revenue Growth: AI-powered personalization driving significant sales increases

But here’s the reality check: experts predict that early AI agents will start with small, structured internal tasks with minimal financial risk. Don’t expect to turn these systems loose on real customers spending real money without extensive testing and human oversight.

Conclusion: Your AI Strategy Starts Today

The bottom line? Generative AI creates content, AI agents perform specific tasks, and agentic AI orchestrates complex workflows. Each has its place in your AI strategy, but only if you use them correctly.

Agentic AI workflows are expected to increase eightfold by 2026. The companies that understand these distinctions today will be the ones dominating their industries tomorrow.

Don’t let another quarter pass while your competitors gain ground with properly implemented AI solutions. The window for early adoption advantages is closing fast, but there’s still time to position your business as an AI leader in your industry.

Start by picking one use case that perfectly matches one of these technologies. Build it, measure the results, and scale from there. Your future self—and your bottom line—will thank you for making the right choice today.

Ready to stop spinning your wheels and start building AI solutions that actually move the needle? The technology exists. The frameworks are proven. The only question is: will you be one of the 1% that gets AI implementation right, or will you join the 99% still figuring it out?